Introduction

Theoretical Framework and Hypotheses Development

Technology acceptance model (TAM)

Hypothesis and model development

Research Methods

Questionnaire development

Pilot Study

Data collection

Measurement model and validity

Data analysis

Results

Measurement model assessment - Discriminant validity and cross loadings

Structural model assessment

Direct effect, indirect effect, and total effect

Discussion

Conclusions

Theoretical implications

Practical implications

Limitations and future research

Introduction

Emerging technologies, particularly generative artificial intelligence (AI) technologies such as generative AI chatbot systems, are increasingly being employed in government and private sectors to improve operational efficiency and service delivery and to support long-term government management. A generative AI chatbot system is an advanced generative AI tool that supports user engagement and expedites activities in a variety of fields. Created to replicate human-like cognitive capacities, generative AI chatbot systems use deep learning, machine learning, and natural language processing (NLP) to deliver context-aware, individualized, and effective support [1]. With these technology initiatives, agencies are able to manage large numbers of external questions, offer support at any time, and improve user experiences by promptly responding to enquiries [2]. Integrating generative AI tools into government organizations can create a favorable environment for meeting citizens’ expectations of digital government and for further generative AI transformations. AI is changing governments by improving decision-making processes, increasing consumer satisfaction, and reducing administrative workload. Governments employ AI technologies to evaluate massive amounts of data, discover trends, and decide on policies that will better serve the interests of the population [3]. This ability is significant for resolving complicated public concerns, since AI may reveal insights that traditional analytical instruments cannot. AI has a wide range of applications, from public safety and traffic management to healthcare, where it can speed up information sharing while also improving operational efficiency and responsiveness to citizen needs.

On the other hand, the integration of generative AI tools into government operations raises serious ethical, accountability, and transparency concerns [4]. Government agencies must navigate the challenges of ethically combining AI technologies while ensuring that these platforms uphold principles such as equity and accountability [5]. Using generative AI, governments can automate repetitive queries, increase citizen interactions, and streamline internal personnel procedures [5]. However, for government organizations, implementing new generative AI systems can be quite challenging and expensive [6]. Many governments are unable to obtain the advantages that generative AI technology initiatives are intended to produce [7, 8]. Poor technological implementations have resulted in significant financial losses, particularly in the government sector, emphasizing the need to accurately predict organizational and user needs. Despite the potential for improvement, the low rate of acceptance of information and communication technology (ICT) solutions, such as generative AI chatbot systems, remains a major impediment that restricts both tangible and intangible benefits [9]. The successful implementation of these technologies depends strongly on user perceptions. Positive user responses, although frequently limited as a basis for judging technological viability, contribute to the improvement of generative AI systems. Gathering more information and projections can help improve the chances of a successful generative AI transformation in the public sector. Examining user attitudes and behavioral intention (BI) can help to assess the level of acceptance of generative AI systems that can be integrated into the public sector [10].

Moreover, generative AI chatbot systems have recently gained recognition in a range of industries, most notably in customer service handling and public services in many countries. However, user acceptance of these systems has not been as high as expected [11]. This mismatch raises the question of why, despite the capacity of generative AI systems to speed up administration and improve service efficacy, users are reluctant to use them [11]. Even though generative AI chatbot systems offer advantages such as faster responses, lower operational costs, and the convenience of responses at any time, many consumers continue to use outdated, ineffective methods to communicate with governmental services. Therefore, understanding the reasons for this hesitation is required to improve generative AI system acceptance in public sector initiatives.

Significant progress has been made in understanding user acceptance of new information technologies, especially with the help of the technology acceptance model (TAM) [12]. Davis confirmed TAM, both theoretically and experimentally, as a valid framework for assessing user adoption of emerging technologies. To better understand technology acceptance, various experts have elaborated on TAM and presented extended models with additional factors. These updated models guide technical teams in optimizing system design and enable decision-makers to evaluate new technological services. TAM also demonstrates the importance of user behavior and willingness to accept new technologies. It provides a framework for determining the key factors that influence consumers’ decisions, such as perceived usefulness and perceived ease of use. These insights increase the likelihood of a system’s successful implementation by supporting decision-makers in developing better strategies. TAM aids decision-making by providing an understanding of user mindsets and behaviors, which can strongly affect how well contemporary technologies are deployed across sectors in a sustainable manner.

Sri Lanka’s generative AI transformation strategies have been energized by initiatives like the “National Digital Economy Strategy 2030” and investments in e-governance, fintech solutions, and smart infrastructure [13]. However, the transformation remains a work in progress and faces significant challenges. The country has initiated generative AI transformation projects such as the Lanka Government Network (LGN), 4G/5G connectivity, and generative AI-driven system innovations in sectors like healthcare and tourism. These projects reflect a greater commitment to the acceptance of modern technologies for economic growth and public service enhancements. However, progress is hampered by limited digital literacy and an underdeveloped regulatory framework for emerging technologies like generative AI. At the same time, efforts to integrate digital solutions such as virtual assistants, including generative AI chatbots, are ongoing, but issues such as data privacy concerns, economic instability, and institutional trust deficits continue to impede widespread acceptance. A continued lack of identification and understanding of user perceptions will prolong the negative impacts on generative AI transformations in Sri Lanka.

Therefore, in this study, TAM is taken as the base model and extended with new external constructs to gain a clear understanding of the acceptance of generative AI chatbot systems in the Sri Lankan public service environment. Traditionally, TAM has been used to identify user behavior and intentions regarding new technologies [14, 15]; however, the unavailability of TAM extensions that treat trust as a unidimensional construct in public sector generative AI solutions is the main research gap in the currently available literature. Another study [16] highlighted trust’s role but did not extend it to AI or generative AI transformation more broadly. This gap is critical given Sri Lanka’s push for generative AI-driven solutions. Different trust criteria are needed for public services because of their mandated use, policy ramifications, and bureaucratic complexity. In addition, legal/ regulatory support and user experience have received insufficient attention in shaping generative AI system acceptance and are rarely integrated into TAM. Studies such as “Regulatory Impact Assessment in Sri Lanka” [17] address regulatory issues; however, they do not address AI or digital transformation. Therefore, this study could fill the gap created by Sri Lanka’s lack of regulations specifically addressing generative AI system initiatives. Moreover, most TAM extensions are generic or based on the environments of developed countries, ignoring the unique socio-cultural, economic, and technological factors in developing countries like Sri Lanka.

This study focuses primarily on analyzing and understanding user trust and its relationships with other new constructs related to generative AI systems in public organizations, in order to enhance user acceptance of generative AI transformations for smart cities and smart governments. To accomplish this goal, the structural equation modeling (SEM) approach is used, a statistical technique that enables the simultaneous examination of multiple relationships between unobservable latent variables. This approach is useful for investigating how the elements described above influence user intentions to use generative AI chatbot systems in public services. By focusing on how user trust and other constructs influence generative AI system acceptance in government AI transformations for smart cities and smart governments, this study intends to provide a novel model that can aid government ICT policymakers in designing sustainable technology acceptance plans for successful public service delivery.
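To illustrate what is meant by examining several relationships between latent variables at once, the generic textbook form of an SEM can be written as a measurement part and a structural part. This is a sketch in standard notation, not the specific estimation variant or notation used in this study, and all symbols below are assumptions introduced for illustration.

```latex
% Generic SEM in standard textbook notation (illustrative assumption, not this study's notation)
\begin{align}
  x    &= \Lambda_x \xi + \delta        && \text{(measurement model, exogenous constructs)} \\
  y    &= \Lambda_y \eta + \varepsilon  && \text{(measurement model, endogenous constructs)} \\
  \eta &= B\eta + \Gamma\xi + \zeta     && \text{(structural model)}
\end{align}
```

Here $\xi$ and $\eta$ denote exogenous and endogenous latent variables, $x$ and $y$ their observed indicators (for example, questionnaire items), $\Lambda_x$ and $\Lambda_y$ the loading matrices, $B$ and $\Gamma$ the path coefficient matrices, and $\delta$, $\varepsilon$, and $\zeta$ the error terms.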

Theoretical Framework and Hypotheses Development

The acceptability of generative AI systems is challenged by their architecture, and TAM alone cannot serve as a complete instrument for assessing it. TAM must be combined with other key elements to produce an up-to-date model that is suited to generative AI technology. The purpose of this study is to develop and compile a complete list of the customer behavior drivers of long-term generative AI technology acceptance and to assess their influence. The client can then decide whether to make the necessary interventions to maximize the efficient use of the newly introduced technology. However, generative AI chatbot systems are very new, and their acceptance and development in government organizations are complicated. Three identified external constructs (trust (TR), legal/ regulatory support (LR), and user experience (EX)) may exert direct and indirect influence on the long-term adoption of generative AI chatbot systems, particularly in public sector organizations in Sri Lanka.

Technology acceptance model (TAM)

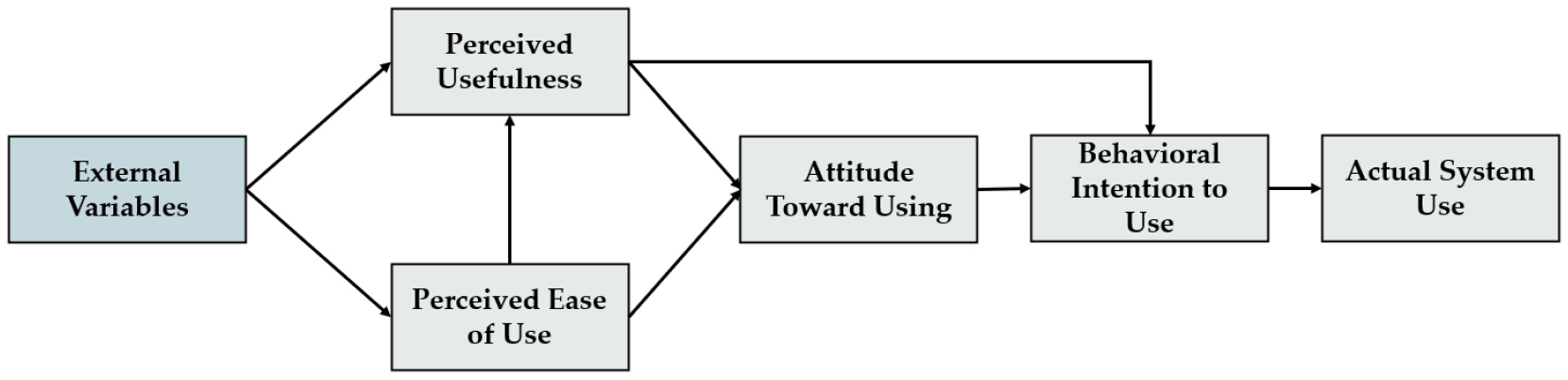

The TAM (Figure 1) has been used in studies to determine the level of user acceptance of new e-technologies or e-services [18, 19]. Grounded in Ajzen and Fishbein’s theory of reasoned action (TRA), TAM is one of the most successful and reliable contributions. TAM [18, 19] is the most extensively applied model for investigating user perceptions of the acceptance and usage of new technologies.

Users’ perceptions about the actual system use of new technology were found to be related to their attitude toward using, and their BI to use, the specific technology. Perceived usefulness (PU) demonstrates a stronger association with utilization than the other model variables. As a result, this study integrates PU and perceived ease of use (PE) into a new research model. PU is described as the extent to which a user believes that accepting a particular system will improve their work performance. PE is the degree to which a user believes that employing a specific system will be effortless and that the system will be easy to adopt.

Hypothesis and model development

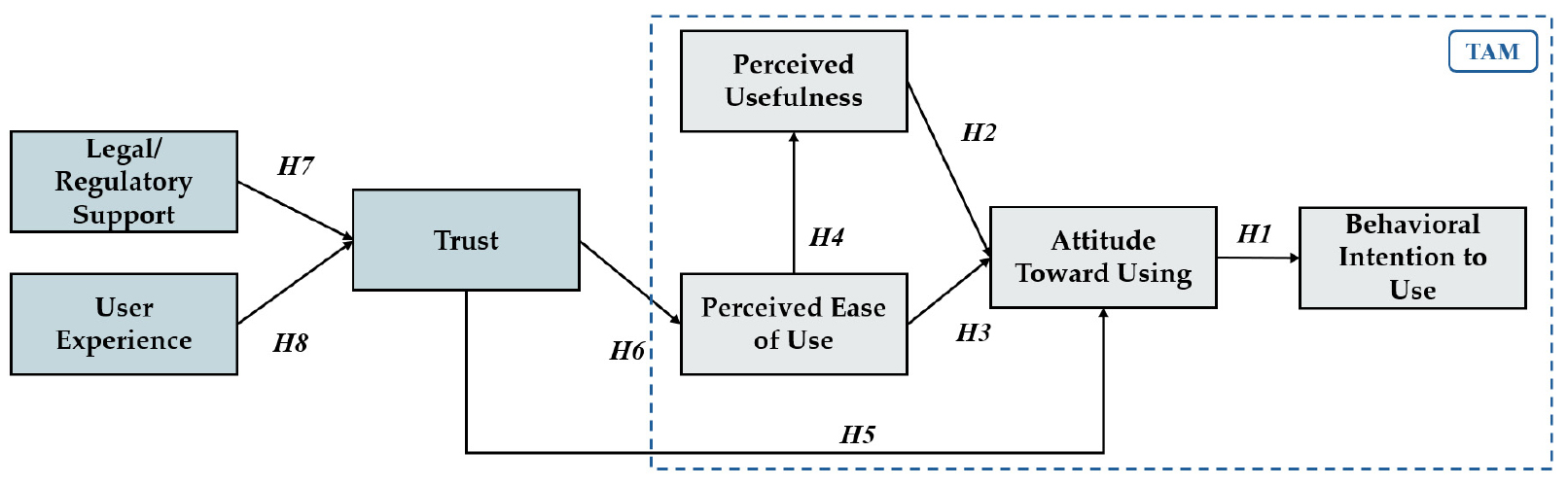

According to the established literature, TAM offers good results for predicting the BI to accept new technology [14, 20, 21, 22]. However, no TAM variant yet adequately captures the acceptance of the most recent technologies; therefore, developing this augmented TAM is essential. Consequently, trust, legal/ regulatory support, and user experience are added as external constructs to TAM to better explain generative AI chatbot acceptance in public service. Trust is crucial because citizens need to believe that generative AI chatbot systems and the institutions behind them are reliable, ethical, and act in their best interest, especially when dealing with sensitive public services. Legal/ regulatory support is required because clear laws and regulations help to reduce citizens’ fears about privacy, security, and accountability. It also enhances user trust, leading to the acceptance and use of generative AI technologies. User experience is included because citizens’ direct interaction with generative AI chatbot systems shapes how easy and helpful they find these systems, and thereby strongly affects their perceptions of usefulness and ease of use in generative AI technology acceptance. These aspects, along with the technology’s peculiarities and distinctive structure, have been evaluated by a large number of AI professionals. New constructs have been developed to account for the changes and developments in the usage of generative AI in public services during the past few years. This paper presents a new research model in two parts and explains each hypothesis in detail: first, the TAM constructs (behavioral intention (BI), attitude (AT), perceived usefulness (PU), perceived ease of use (PE)); second, the external constructs (TR, LR, and EX).

TAM constructs

1) Behavioral intention (BI)

Behavioral psychology suggests that user behavior is influenced by intentionality, combined with the perceived likelihood that users assign to engaging in a specific action, such as adopting and experiencing new technology [23]. BI helps identify clear signs of user approval early in the system development process. It also helps clients accept useful innovations or turn down flawed and risky ones, lowering the chance of offering poor-quality technology before it is rejected [18, 24]. One study confirmed that the PU of a technology directly influences users’ BI to use it [25]. Users are more likely to start using new technology if they see it as helpful. BI concerns a user’s personal reasons for trying out a new system, and the reason for using it comes from what the user wants to achieve [26]. However, another author found that a person’s sense of right and wrong works as a distinct factor in shaping their BI [27]. In morally charged situations, attitude values may affect the user’s behavioral intentions [28]. Another study suggested that habit predicts classroom behavior better than intentions. However, later findings showed that perception and intentions matter more when habits are not the main factor [29]. When past behavior was factored in, the link between attitude and intention became weaker. Likewise, past behavior reduced how much intentions shaped actual behavior [30]. There is evidence that many factors are connected to behavior, and the link between thinking and actions was found to be more random than based on careful reasoning [31]. Another author pointed out that more research is needed to examine PU and PE from different perspectives in order to determine how external factors affect these internal behavioral outcomes [18].

The model focuses mainly on understanding intentionality in behavior. As mentioned before, various studies have identified attitude indicators and measured how strongly they are connected to behavior. A better way forward would be to focus on assessing and predicting behavior, which could reveal the key factors and traits that influence why consumers intend to use generative AI technology in today’s systems. In TAM, BI expresses how much someone plans to use a new technology, such as a computer or an app, before they actually start using it. Introduced by Davis [18], BI is a key part of TAM because it connects what people think about a technology, namely how useful it is (PU) and how easy it is to use (PE), with whether they will use it in the end. Some studies show that if people believe a technology helps them and is not hard to use, they are more likely to want to try it [18]. For example, research by Venkatesh and Davis [32] shows that BI strongly predicts actual use, making it a bridge between thoughts and actions. In short, BI is important in TAM because it indicates how ready someone is to accept and use a technology based on their feelings about it.

As part of the nation’s drive towards digitalization, BI measures how much Sri Lankans intend to use generative AI chatbot systems. It is a fundamental TAM concept that indicates whether someone intends to use AI chatbots for many public services. In Sri Lanka, where some individuals have more access to technology than others, BI hinges on whether people believe generative AI chatbots are easy to use and helpful, whether they trust them, and whether they believe the regulations support them.

2) Attitude (AT)

The term “attitude” indicates how a user feels about the new technology, whether positively or negatively [18, 24]. The TRA [33] led researchers to uncover real user behavior. AT toward using and exploring something, such as a modern technology system, is called the user belief system. When deciding what they intend to do, people consider how they feel about each available option. However, the process of choosing based on similar attitudes does not show how someone forms their thoughts about whether or not to perform different tasks [34]. Considering users’ desires and what shapes their attitudes could change the outcome of their intended behavior. Also, standard beliefs and attitude values can be combined by averaging them according to what users expect [35]. According to various studies, a person’s attitude can both affect and be affected by the attitudes of others. It has been assumed that how people influence each other socially is key to whether a new system is accepted [36]. Moreover, using new technology can shift how people feel about it, which might in turn affect how organizations are set up, the way people communicate, and the places where work happens [37].

According to another research study, only a high degree of agreement between predictors and fixed criteria, properly applied, will guarantee a sound link between attitude and behavior [38]. TAM and further research findings have validated the link between AT and BI. Similarly, people who feel good about generative AI chatbot systems are more likely to want to use them for digital government services. If they think such a system is helpful, they will plan to use it for public services. A positive AT makes both workers and citizens eager to try it out. When they believe it makes public services easier, they are more ready to depend on it. Overall, liking a generative AI chatbot system pushes people to intend to use it in their daily public interactions. Therefore, it is hypothesized that:

Hypothesis 1 (H1): Attitude has a positive and significant impact on behavioral intention toward generative AI chatbot system acceptance in public services

3) Perceived usefulness (PU)

The degree to which a user believes that applying the new technology will enhance his or her individual performance is known as PU [18, 35]. Davis [18] also determined that focused partial involvement could affect the attitudes and beliefs of the user. PU is strongly associated with intention, whereas attitude shows only a limited association. This was clarified in his articles, which covered the case of people who want to use a useful technology despite having a negative attitude towards it. In theory, PU is a strong factor; users are more inclined to support an application based on its operational capabilities than on how easy or difficult the system is to use, which influences service acceptance [24]. This implies that the criteria of PU are related and influence the degree of use [39].

Several factors can influence PU, including environmental outliers, which have the potential to drastically affect consumer views. It is proposed that contextual factors such as perceived environmental uncertainty and decentralization will influence the PU of aggregated data. Certain findings explain the advantages of earlier PU integration in areas such as technological systems [40]. Another research study found that PU and enjoyment had a similar effect on the frequency and duration of use, whereas computer anxiety affected enjoyment more than PU [41]. Since many factors of the system environment and PU had a major impact on PE for the new technological system, there was a direct impact on modern information technology solutions, particularly on perceived ease of use [42].

PU plays a crucial role in shaping attitudes toward adopting generative AI chatbot systems in public services in the context of Sri Lanka’s generative AI transformations to build smart cities and smart government plans. As the country advances its e-government initiatives, generative AI based systems are expected to enhance service efficiency, reduce bureaucratic delays, and improve citizen engagement [43]. Dwivedi indicates that when users perceive modern systems as beneficial in reducing administrative burdens and providing timely responses, they are more likely to develop a positive attitude toward their acceptance [44].

Furthermore, Sri Lanka’s National Digital Economy Strategy 2030 [13] aims to leverage generative AI to streamline public administration, emphasizing the necessity of user-friendly and efficient systems. However, given the relatively low acceptance rate of generative AI systems in Sri Lankan public institutions, understanding the impact of PU on AT formation is essential for ensuring effective implementation. Assessing PU within Sri Lanka’s digital governance framework is thus critical to encouraging citizen and employee confidence in generative AI-powered public services. Therefore, it is hypothesized that:

Hypothesis 2 (H2): Perceived usefulness has a positive and significant impact on the attitude toward generative AI chatbot system acceptance in public services

4) Perceived ease of use (PE)

PE refers to people’s belief or confidence that using new technology will be simple and straightforward for them [24, 35]. PE is one of the key structural constructs of TAM. This construct has two direct positive impacts, on PU and on AT [24]. Several research studies have supported and used TAM to estimate customer behavior in new technology acceptance [24, 35]. PE is the probability that system users will expect the desired new technological system to be effortless [45]. As a construct, PE is the extent to which the user anticipates and believes that using a particular service or technical system will be simple [24].

Creating a new system might be simple, but conveying users’ emotions and their aspirations for a responsive, easy-to-use, and easy-to-manage system to the service providers, and ensuring the system’s adaptability, become crucial challenges in achieving the objectives [46]. The interactions between some constructs may explain why utilization is not necessarily associated with usability. Importantly, they would not explain why usage might increase as PE decreases [39]. Other researchers [47] found that consumers assume that playing online games is simple. In this regard, PE reflects the lower effort required to use the information technology system.

Many researchers have considered PE when determining whether a system will be accepted by users. Furthermore, although a few of these investigations did not obtain the expected results for PE, TAM was frequently applied in practice in this field [48]. The value of actual experience in determining PE was verified by the outcomes of previous user acceptance tests that focused on actual use [40, 49, 50, 51, 52].

PE is a crucial determinant of user acceptance of AI chatbot systems in Sri Lanka’s generative AI transformation landscape. Systems that are responsive and require minimal effort to operate are more likely to be adopted [43]. Because digital literacy levels vary across different areas, government-supported generative AI solutions must prioritize usability to encourage acceptance across diverse user groups. If generative AI chatbot systems are designed with simple interfaces and seamless user experiences, public confidence and willingness to engage with these technologies will increase, thereby supporting the broader digital governance strategy.

PE also significantly affects the PU of generative AI chatbot systems in the Sri Lankan government. According to TAM, when users find a system easy to operate, they are more likely to recognize its utility in enhancing productivity and service efficiency [18]. Since the Sri Lankan government’s generative AI transformations are still progressing, a complex AI chatbot interface could slow down acceptance. Therefore, ensuring that modern technological systems such as generative AI chatbot systems require minimal training and integrate seamlessly with existing e-government platforms can enhance their PE. This is particularly relevant as Sri Lanka continues to implement generative AI-driven public services, where ease of use can drive higher engagement and trust in the technology, ultimately facilitating its long-term sustainability. Therefore, it is hypothesized that:

Hypothesis 3 (H3): Perceived ease of use has a positive and significant impact on attitude toward generative AI chatbot system acceptance in public services

Hypothesis 4 (H4): Perceived ease of use has a positive and significant impact on perceived usefulness toward generative AI chatbot system acceptance in public services

External constructs

The following external constructs are adopted according to environmental and technology characteristics. Research studies have confirmed their definitions and relationships, which are shown in Table 1.

Table 1.

Definitions for external constructs

| External Construct | General Definition | Source(s) |
| --- | --- | --- |
| Trust | Trust involves the confidence that users place in technology, believing that it can perform its functions reliably and will act in the users’ best interest. | [53, 54, 55, 56] |
| Legal/ regulatory support | Legal/ regulatory support encompasses the frameworks and guidelines established by authorities to ensure the ethical use and protection of technologies, including compliance standards. | [57, 58] |
| User experience | User experience encompasses all aspects of the end-user’s interaction with a product, emphasizing satisfaction, usability, accessibility, and the overall emotional response. | [59, 60, 61, 62, 63] |

1) Trust (TR)

Trust is the extent to which people feel confident, at ease, and secure when they use technology [60, 64]. TR, security, and privacy are some of the factors that either directly or indirectly motivate people to use technology [65]. A trusted system that adapts to the inescapable changes in trust can shape how social interactions evolve over time. Individuals who have a positive attitude towards technology may be more trusting and feel more secure than those who have negative feelings about it. When TR is taken into consideration, there are large partial relationships between risk and acceptance [66]. When customers’ confidence erodes, they are less likely to take risks and are better protected against the possibility of disloyalty. For new technology-based applications, TR should be strong, while risk should be minimized. TR has a direct impact on both AT and the performance of the available technologies. TR is the primary focus of this study, and it has a large indirect effect on customer behavior. TR may influence the consumer’s selection of a technology or even a service [61].

TR can be identified as a fundamental factor influencing the acceptance of generative AI chatbot systems in Sri Lanka’s government sector. TR in generative AI systems depends on transparency, reliability, and data security, especially when the government handles sensitive citizen information. Research suggests that when users trust generative AI-driven services, they are more likely to develop a positive attitude toward their acceptance [67]. Sri Lanka’s efforts to integrate generative AI into public services, such as AI chatbot systems for e-government portals, require strong mechanisms to build TR, including ethical generative AI policies and regulatory frameworks. Without adequate trust, even the most advanced generative AI chatbot systems may face challenges from both citizens and government employees, limiting their effectiveness in generative AI transformation initiatives.

TR also plays an important role in developing perceptions of a generative AI system’s ease of use, particularly at the current stage of Sri Lanka’s generative AI transformations for smart cities and smart government plans. When users believe that a generative AI chatbot system operates transparently and without risks, they are more likely to perceive it as user-friendly and intuitive, in line with the findings of [68]. Establishing clear policies on generative AI accountability and user control can reinforce TR, making these systems more approachable and easier to use within public services. Therefore, it is hypothesized that:

Hypothesis 5 (H5): Trust has a positive and significant impact on attitude toward generative AI chatbot system acceptance in public services

Hypothesis 6 (H6): Trust has a positive and significant impact on perceived ease of use toward generative AI chatbot system acceptance in public services

2) Legal/ regulatory support (LR)

LR frameworks are laws and policies created by the government to monitor and guarantee that technology users and service providers uphold their responsibilities and refrain from infractions. The protection of people’s privacy has become a crucial issue as the globe grows more interconnected due to digital technologies. In addition to examining the complex relationship between technology development and the fundamental right to privacy, one study looks at how the law is changing and how this affects people, companies, and governments [69]. Government LR is crucial for e-government and generative AI transformation initiatives, service quality monitoring, approving new technologies, and implementing them across the country. Agencies must also navigate a complicated web of LR while upholding moral norms and ideals in order to guarantee ethical and sustainable business activities [70]. The purpose of these laws is to guarantee that all procedures are carried out fairly and efficiently. They can be applied to generative AI chatbot systems in terms of how users behave when using these technologies. Regulation is required to prevent or lessen the ambiguity that results. Government laws, acting as a moderating variable, strengthen the impact of PU, PE, perceived risks, user experience, and privacy and security concerns on citizens’ intentions to accept and adopt generative AI [71]. However, the expansion of generative AI systems faces many problems, such as weak government LR and insufficient government attention to implementing such regulations. Another study [72] established the principle that government regulations affect the use of technological innovations in two ways: first, by restricting taxation and other decisions, and second, by altering how technological innovations are implemented in the environment.

Government LR has become increasingly crucial for the successful application of technical systems. Technology can advance quickly and significantly, which makes supportive LR more important. The acceptance of new technology may be aided and supported by the greater availability of technology and service providers in high-intensity nations. Furthermore, another study found that government LR must safeguard the interests of technology, particularly in light of the last ten years of customer annoyance over subpar technical services, which may be resolved by increased government LR [73].

Trust in generative AI chatbot systems within Sri Lanka’s government will be reinforced by proper LR. In the absence of clear regulatory frameworks for generative AI governance, concerns over data privacy, security, and algorithmic fairness can delay acceptance of such technologies. Sri Lanka’s personal data protection act (PDPA) and emerging generative AI laws and regulations aim to build a secure environment for generative AI acceptance in public services. These LR can mitigate risks related to data misuse and bias, reinforcing TR in generative AI chatbot systems among both public officials and citizens. Structured regulatory frameworks are needed to foster sustainable generative AI transformation in Sri Lanka. Based on previous research outcomes, this study assumed that TR and LR were directly correlated. Government LR lower the degree of risk, which has a major impact on consumers’ TR in new technologies. As secure regulations for implementing generative AI technology in the public sector increase, they will build trust in such systems and increase their usage. Therefore, it is hypothesized that:

Hypothesis 7 (H7): Legal/ regulatory support has a positive and significant impact on trust toward generative AI chatbot system acceptance in public services

3) User experience (EX)

User experience is the degree to which a customer’s knowledge and expertise enable them to employ new technologies [51, 59]. A high degree of experience boosts client confidence in using the new information technology (IT) systems [47]. Experience has a significant impact on TR, according to numerous studies. This factor has a big influence and might go up or down based on the experience level of the person [60, 61, 62, 63].

Customers must possess a certain amount of experience and knowledge in order to use any new technology [74]. The new technology’s usability may be affected by a user’s aptitude and prior experiences, particularly during a brief training period that supports and shapes their control and usage of certain systems [35]. According to studies, users’ satisfaction and experience with new technology boost interaction and usage. To quantify and evaluate experience as a continuous variable, Fazio and Zanna carried out a correlational analysis. Linear behavioral experience served as the basis for the attitude: the more likely an attitude was to predict future behavior, the more it influenced people’s experiences, the greater their range of rejection, and the more certain they were of their attitudes [75]. Industry experience may have a major impact on productivity gains and the high-performance advantages of computer applications [76]. The degree of technological experience has a significant influence on how new technology is perceived. Users’ trust, belief, and acceptance of a new system can be influenced by their experience, knowledge, and behavior, as with any technology system built on an innovative application [64]. It follows that prior experience with an IT service is a significant consideration for developing, testing, or implementing models of new technology acceptance and use. One theory is that behavior is directly influenced by experience [77]. The associations seen in subject pool research, according to Fazio and Zanna, provide a model of how experience may affect attitude-behavior consistency by influencing trust [78]. According to Venkatesh, people modify their system-specific PE to reflect their interactions with the system as they gain more firsthand experience with it [48].

In addition, Chin & Hsi’s study on the gaming experience and how it affects players’ behavior defines the flow experience as the degree to which users participate in an online gaming activity with involvement, enjoyment, control, focus, and intrinsic interest [47]. People’s responses to an IT system evolve over time based on their experiences. Numerous studies have noted that experience is a significant factor that influences the degree of TR in a system [79, 80]. In 2000, Venkatesh suggested that although users’ judgements are guided by experience, they can be changed and rearranged once users try the system in real life and gain confidence in the technology [48]. Experience plays a crucial role in gaining the trust of customers in any technological adaptation. Based on these findings, this paper assumes that EX and TR are directly correlated and that EX plays a vital role in building TR toward AI chatbot systems in Sri Lanka’s public services. The suggested model will be impacted by this relationship. Therefore, it is hypothesized that:

Hypothesis 8 (H8): User experience has a positive and significant impact on trust toward generative AI chatbot system acceptance in public services

These constructs, which can better account for the unique potential and constraints of deploying virtual assistants in organizational contexts, enable a more comprehensive framework for assessing user behavior and enhancing technological integration. Also, this type of research can be regarded as highly reliable and suitable for immediate application because it yields valid research results [81].

To evaluate their influence on the acceptance of generative AI chatbot systems in the public sector, several external user behavior indicators were identified for this study. These indicators directly affect the acceptance and effectiveness of generative AI technology and help to determine whether residents or government employees will decide to interact with generative AI chatbot systems. Several factors influence users’ decisions to use generative AI chatbot systems in public services, including perceived utility for enhancing service delivery, simplicity of use, responsiveness, and system confidence and trustworthiness.

Moreover, the acceptance of generative AI chatbot systems in government is influenced by the three key external constructs described above: trust, legal/ regulatory support, and user experience. Trust is critical, as users must feel confident in the system’s security and accuracy. Legal/ regulatory support shapes user trust, as individuals are more likely to adopt generative AI chatbot systems if they see that the systems are trustworthy, bound by laws and regulations, and that users are protected by law. The EX of a generative AI chatbot system must be intuitive and appealing to foster more engagement as well as user trust in how to use such technological applications.

These external constructs reflect the environmental factors that, along with contextual factors, influence the adoption and acceptance of innovative technologies. The suggested conceptual model “augmented TAM” for sustainable generative AI chatbot system acceptance in public sector in Sri Lanka (Figure 2) shows how TAM is integrated with important external constructs, including TR, LR and EX.

Research Methods

Questionnaire development

In this study, all the questions in the developed questionnaire were presented with the same wording and order to guarantee the consistency of the constructs and measurement items. The measurement items for TAM constructs (BI, AT, PU, PE) were carefully operationalized based on established scales from [18]. Likewise, the measurement items for TR, LR, and EX were operationalized based on established scales from prior validated studies [53, 54, 55, 56, 57, 58, 60, 61, 62, 63]. Unless otherwise stated, questions used a five-point Likert scale. The structured online questionnaire consisted of four main sections, and two sets of Likert scale descriptors were mainly used, with “Strongly Disagree” and “Strongly Agree” as the end points of the 1 to 5 range. The first section gives a brief introduction to this research and information for respondents, and the second section asks respondents to provide their personal information. This includes their gender, age, highest level of education, job/ service category, computer experience & skills, and online/ mobile application usage or experience (public/ private sector). The third section measures the extent of various external influencing constructs on generative AI chatbot system acceptance in public services. Lastly, section four measures the extent of the influencing TAM constructs on generative AI chatbot system acceptance in public services.

Moreover, several ethical considerations were taken to ensure the participants’ privacy and safety. Participants were informed about confidentiality, anonymity, and the protection of their privacy. Also, participation was entirely voluntary, and informed consent was collected from all participants prior to starting the survey response.

Pilot Study

We performed a pilot study with a sample of 40 respondents, including both regular citizens and government employees, prior to starting full-scale data gathering. The goal was to evaluate the thoroughness, applicability, and clarity of the questionnaire items. Cronbach’s alpha (CA) was used in an initial reliability investigation to make sure the scales were internally consistent. The language and formatting of the items were improved for clarity based on participant comments. To increase the questionnaire’s accuracy and ensure that the scales appropriately represented the constructs examined in our study, a few minor changes were made.

Data collection

Data were collected using an online Google form over a four-month period. A total of 412 public service users were included in this analysis. The sample mainly consisted of public sector employees because they could interact with generative AI chatbot systems as both employees and citizens, while private sector users were included to ensure broader applicability of findings to Sri Lanka’s entire digital population. By incorporating both groups, the study ensures that generative AI chatbot system acceptance is evaluated from both an institutional and a public-user perspective, capturing a comprehensive acceptance picture. Table 2 presents the composition of the sample, with the user intention on the acceptance of generative AI chatbot systems in public sector organizations in Sri Lanka. Males constituted 46.36% of the respondents and females 53.64%. The age distribution of respondents was: below 20 (0.49%), 20-29 (9.95%), 30-39 (50.97%), 40-49 (27.43%), and 50 and above (11.17%).

Table 2.

Basic statistics of the sample

Additionally, most respondents were employed in the public sector (72.57%), while 24.27% worked in the private sector and 3.16% were unemployed. The highest educational level of the respondents was a first degree for 51.46%, followed by a Master’s or higher degree for 29.85%, while 18.69% had a high school level education. The reported levels of IT usability and knowledge were none (1.46%), very limited (1.46%), some experience (45.39%), quite a lot (36.65%), and extensive (15.05%). This shows that most of the respondents have enough IT knowledge to use such modern technology. Moreover, the reported levels of mobile application experience were none (1.94%), very limited (1.94%), some experience (39.32%), quite a lot (37.86%), and extensive (18.93%). This indicates that most respondents have sufficient experience using mobile applications to make use of such technological solutions.

Measurement model and validity

Every measurement item needs to have its validity, reliability, and factor loading assessed. Reliability is the consistency of a measure. When a measure yields consistent results under consistent circumstances, it is deemed reliable [82]. For each item loading to be deemed reliable, the value must be equal to or greater than 0.5. Furthermore, CA and composite reliability (CR) values should equal or exceed 0.7. As shown in Table 3, all item loadings exceed 0.5 and all CR values exceed 0.7; CA values exceed 0.7 for every construct except EX (0.654).

Table 3.

Measurement model factor loadings, reliability and internal consistency

| Factor | Code | Description | Loading | AVE | CR | CA |

| Trust | TR1 | Generative AI chatbot systems are trustworthy | 0.800 | 0.549 | 0.827 | 0.765 |

| | TR2 | Generative AI chatbot systems providers give the impression that they keep promises and commitments to information provided | 0.765 | | | |

| | TR3 | Generative AI chatbot systems providers keep my best interests in mind. | 0.809 | | | |

| | TR4 | AI chatbot systems can address my issues | 0.563 | | | |

| Legal/ regulatory support | LR1 | I think the government support of introducing laws and regulations for generative AI technologies would provide an incentive to use AI chatbot systems on public portals | 0.785 | 0.536 | 0.821 | 0.770 |

| | LR2 | I think government regulations and monitoring would reduce the risks associated with using AI chatbot systems on public portals. | 0.677 | | | |

| | LR3 | I think the government should support and/or be responsible for regulating the use of generative AI technologies such as AI chatbot systems on public portals. | 0.745 | | | |

| | LR4 | I think regulations and government insurance should exist to protect the users of generative AI technologies such as AI chatbot systems on public portals. | 0.716 | | | |

| User experience | EX1 | I have good experiences and adequate knowledge to use generative AI chatbot systems clearly. | 0.782 | 0.508 | 0.752 | 0.654 |

| | EX2 | I use online applications rather than offline channels for information inquiring from public agencies (i.e., Telephone, postal mail). | 0.779 | | | |

| | EX3 | My previous experience and knowledge of conducting different online applications motivate me to use generative AI chatbot systems in public services. | 0.554 | | | |

| Perceived ease of use | PE1 | I think learning to operate generative AI chatbot systems would be easy for me | 0.777 | 0.546 | 0.782 | 0.756 |

| | PE2 | I believe it would be easy to get Generative AI chatbot systems to accomplish what I want to do. | 0.681 | | | |

| | PE3 | It is easy for me to become skillful at using this generative AI chatbot system. | 0.754 | | | |

| Perceived usefulness | PU1 | Using this generative AI chatbot system would improve the quality of public service. | 0.788 | 0.589 | 0.851 | 0.900 |

| | PU2 | Using this generative AI chatbot system would increase my productivity. | 0.675 | | | |

| | PU3 | Using this generative AI chatbot system would save time on getting public information and services. | 0.815 | | | |

| | PU4 | I believe this generative AI chatbot system application is useful for delivery of public services online to citizens. | 0.785 | | | |

| Attitude | AT1 | It is a good idea to use generative AI chatbot systems in public sector | 0.812 | 0.722 | 0.912 | 0.919 |

| | AT2 | It is wise to use generative AI chatbot systems in public sector | 0.819 | | | |

| | AT3 | I like to use generative AI chatbot systems in public sector | 0.762 | | | |

| | AT4 | It is pleasant to use generative AI chatbot systems in public sector | 0.817 | | | |

| Behavioral intention | BI1 | If I have access to this generative AI chatbot system, I intend to use it. | 0.858 | 0.637 | 0.840 | 0.815 |

| | BI2 | If I have access to this generative AI chatbot system, I will use it. | 0.893 | | | |

| | BI3 | I plan to use generative AI chatbot systems within the next 6 months. | 0.830 | | | |

| Model fit indices | | CMIN=534.898, DF=254, P=0.000, CMIN/DF=2.106, RMSEA=0.073, TLI=0.868, CFI=0.897 | | | | |

Additionally, the average variance extracted (AVE), which is the mean of the squared loadings of the items belonging to a construct, is the typical metric for establishing convergent validity. Convergent validity is the degree to which a construct’s indicators jointly measure the same construct, that is, the extent to which a latent construct explains the variance of its indicators. When the AVE value is 0.5 or higher, it indicates that the construct explains at least half of the variance of its indicators [82, 83]. As shown in Table 3, the CR values are greater than 0.7 and the AVE values are greater than 0.5 for all constructs, while the CA values are greater than 0.7 for all constructs except EX (0.654). This supports the convergent validity of the constructs.
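The AVE definition above can be illustrated with a short calculation. The following is a minimal sketch, not the authors' AMOS procedure: it assumes standardized loadings and the commonly used composite reliability formula in which each item's error variance is one minus its squared loading. The function names are illustrative only.

```python
def ave(loadings):
    """Average variance extracted: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """Composite reliability, assuming error variance = 1 - loading^2 per item."""
    squared_sum = sum(loadings) ** 2
    error_sum = sum(1 - l ** 2 for l in loadings)
    return squared_sum / (squared_sum + error_sum)


# Trust (TR) item loadings as listed in Table 3
tr_loadings = [0.800, 0.765, 0.809, 0.563]

print(round(ave(tr_loadings), 3))                    # 0.549
print(round(composite_reliability(tr_loadings), 3))  # 0.827
```

For the TR construct, both values agree with the AVE and CR entries reported in Table 3.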

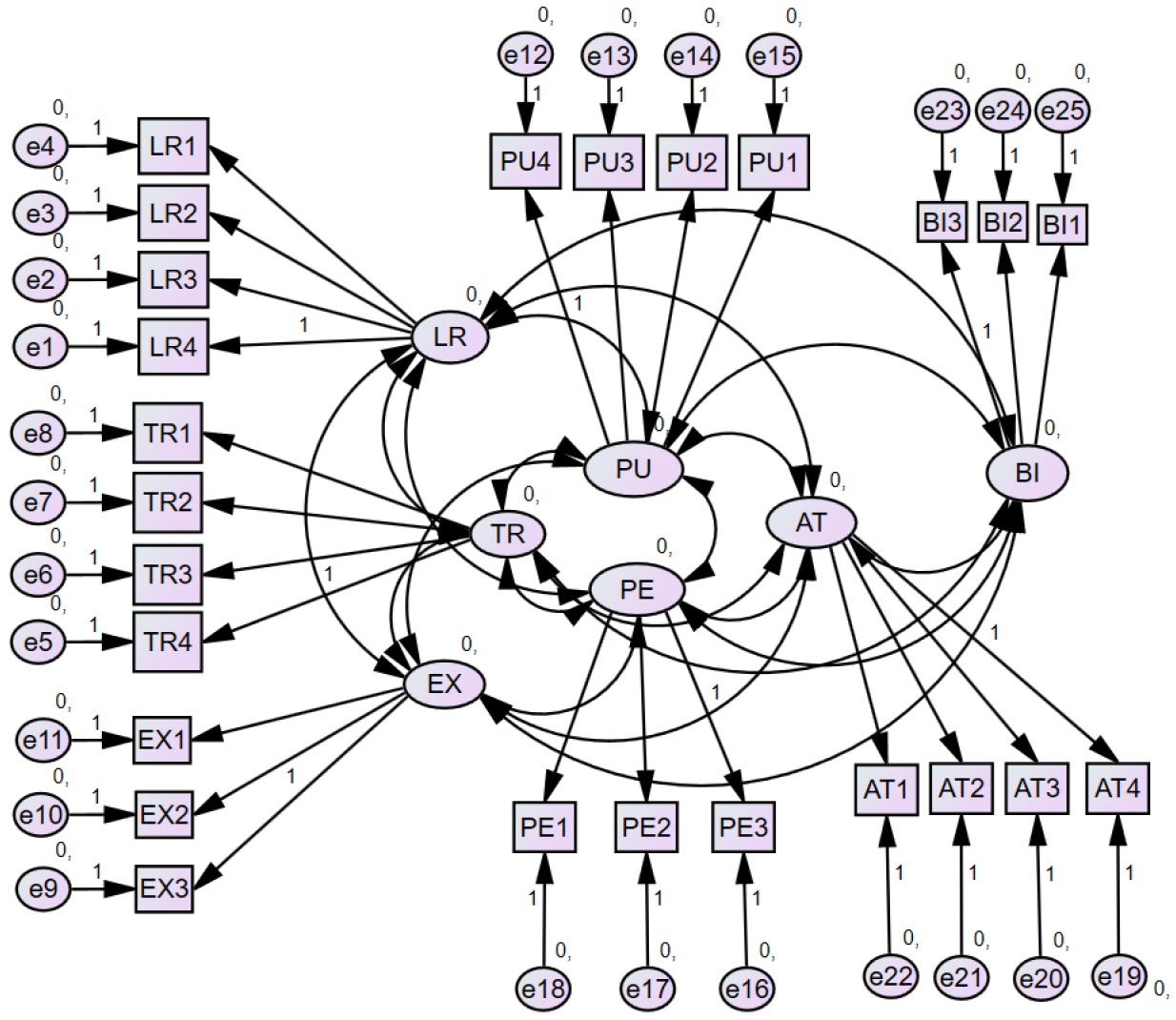

It was found that the model fit indicator values satisfied the evaluation criteria for the overall model fit: CMIN=534.898, DF=254, P=0.000, CMIN/DF=2.106, RMSEA=0.073, TLI=0.868, CFI=0.897. CA and CR values were used to assess construct consistency. CA, with recommended values of 0.7 to 0.8, measures how well a set of items represents a unidimensional latent concept. Composite reliability, with recommended values greater than 0.7, assesses the dependability of the indicators connected with a specific construct. While both metrics reflect internal consistency, CR is preferable to CA for construct-level assessments in SEM analysis. In this study, CA values ranged from 0.654 to 0.919, and CR values ranged from 0.752 to 0.912 for all constructs, indicating acceptable internal consistency.

Data analysis

An intensive literature review was conducted to select and validate the most extensively accepted measurement items for the study. Several analyses were conducted to check the reliability and validity of each construct. For the analysis of this study, the covariance-based structural equation model (CB-SEM) approach was applied using IBM SPSS AMOS software (V23). The methodological technique involved evaluating both measurement and structural models. The structural model examined latent variable associations, whereas the measurement model assessed reliability and validity. The reflective measurement model was evaluated based on indicator loadings, internal consistency reliability (CA and CR), convergent validity (AVE), and discriminant validity (Fornell-Larcker criterion). Meanwhile, the structural model was evaluated using path coefficients and p-values.

Results

The theoretical model of this research was based on the CB-SEM approach. The data analyzed in IBM SPSS AMOS (V.23) resulted in two models: a measurement model (see Figure 3), which evaluates the reliability of the constructs, validity, and model fit, and a structural model, which addresses the hypotheses related to successful generative AI chatbot system acceptance in Sri Lanka’s public service.

Measurement model assessment - Discriminant validity and cross loadings

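The Fornell and Larcker approach is used to evaluate discriminant validity, which is a crucial aspect of measurement model reliability and validity. This method compares the square root of the AVE of each construct (the diagonal values) with the correlations between constructs (the off-diagonal values). Each diagonal value must be greater than the correlations involving that construct. According to Table 4, the constructs fulfill this requirement with one exception: the correlation between EX and PE (0.781) exceeds the square roots of the AVE of EX (0.713) and PE (0.739).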

Table 4.

Discriminant validity based on the Fornell and Larcker criterion

| | TR | LR | EX | PE | PU | AT | BI |

| TR | 0.741 | | | | | | |

| LR | 0.348 | 0.732 | | | | | |

| EX | 0.450 | 0.609 | 0.713 | | | | |

| PE | 0.459 | 0.612 | 0.781 | 0.739 | | | |

| PU | 0.330 | 0.586 | 0.639 | 0.653 | 0.767 | | |

| AT | 0.219 | 0.483 | 0.335 | 0.399 | 0.600 | 0.798 | |

| BI | 0.261 | 0.422 | 0.582 | 0.543 | 0.659 | 0.400 | 0.850 |
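The comparison behind Table 4 can be expressed as a simple check. The sketch below is illustrative rather than part of the original analysis: it takes the lower-triangular values as printed in Table 4 and returns every pair of constructs whose correlation reaches or exceeds either construct's diagonal entry. The function name is an assumption made for this example.

```python
constructs = ["TR", "LR", "EX", "PE", "PU", "AT", "BI"]

# Lower triangle of Table 4: diagonal = sqrt(AVE), off-diagonal = correlations
table4 = [
    [0.741],
    [0.348, 0.732],
    [0.450, 0.609, 0.713],
    [0.459, 0.612, 0.781, 0.739],
    [0.330, 0.586, 0.639, 0.653, 0.767],
    [0.219, 0.483, 0.335, 0.399, 0.600, 0.798],
    [0.261, 0.422, 0.582, 0.543, 0.659, 0.400, 0.850],
]


def fornell_larcker_violations(names, lower):
    """Return (construct_a, construct_b, r) where r >= the smaller sqrt(AVE) of the pair."""
    violations = []
    for i in range(len(names)):
        for j in range(i):
            r = lower[i][j]
            if r >= min(lower[i][i], lower[j][j]):
                violations.append((names[j], names[i], r))
    return violations


print(fornell_larcker_violations(constructs, table4))  # [('EX', 'PE', 0.781)]
```

An empty list would mean that every diagonal value exceeds all correlations in its row and column.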

Also, variance inflation factor (VIF) values were computed from the squared multiple correlations (SMC) obtained from AMOS to evaluate multicollinearity among the latent constructs. The findings show that all constructs’ VIF values (TR = 1.35, PE = 1.10, PU = 1.74, AT = 1.63, BI = 1.20) are well below the suggested cutoff of 5. VIF values less than 5 indicate that multicollinearity is not an issue [84]. As a result, multicollinearity among the constructs is unlikely to distort the predictive relationships in the structural model.
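The conversion from an SMC to a VIF follows the usual definition VIF = 1 / (1 − R²). The snippet below is a minimal sketch of that conversion; the SMC value used is hypothetical and chosen only for illustration, because the paper reports the resulting VIF values rather than the underlying SMCs.

```python
def vif_from_smc(smc):
    """Variance inflation factor from a squared multiple correlation (R^2)."""
    if not 0 <= smc < 1:
        raise ValueError("SMC must lie in [0, 1)")
    return 1.0 / (1.0 - smc)


# Hypothetical SMC for illustration only (not a value reported in the paper)
example_smc = 0.26
print(round(vif_from_smc(example_smc), 2))  # 1.35
```

Under this definition, a VIF cutoff of 5 corresponds to an SMC of 0.8.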

To assess the cross loadings, each indicator’s loading on its own construct should be higher than its loadings on the other constructs. According to Table 5, the cross-loadings criterion is satisfied; most of the items have a loading above 0.7, and each item loads highest on its own construct.

Table 5.

Cross loadings results

Structural model assessment

The structural model was evaluated by calculating the difference between dependent variables. The structural model is estimated primarily by using path coefficients. In a structural model, a path coefficient quantifies the relationship between two variables. It indicates the extent to which a change in one variable affects the other, measured in standard deviation units. These coefficients typically range from -1 to 1. Values closer to -1 represent a strong negative relationship, and values approaching 1 indicate a strong positive relationship. Accordingly, the path coefficients in the proposed model suggest that the constructs exhibit predominantly positive relationships, reinforcing the validity of the theoretical framework.

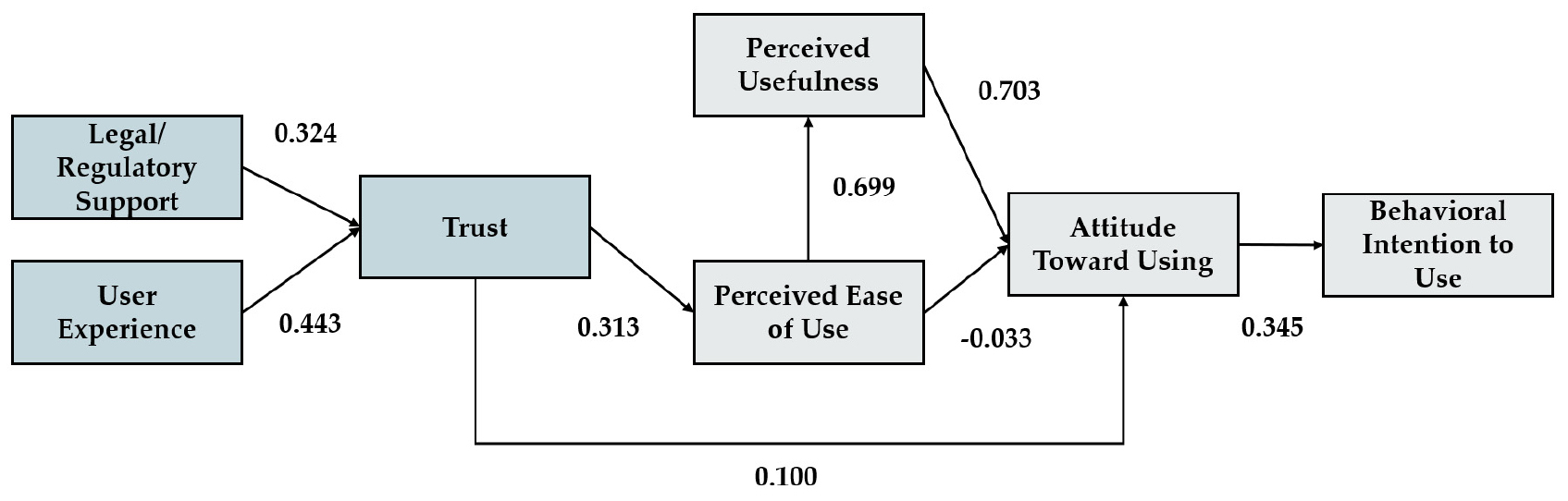

Based on the path analysis (see Figure 4), Table 6 reports the test of each hypothesis using the estimated path coefficients and p-values. Six hypotheses are supported, while the remaining two are not, which indicates that most of the paths between independent and dependent variables are significant.

Table 6.

Hypotheses test results

| Hypothesis | Path | Standard Estimates | Standard Error | Critical Ratio | p-Value |

| H1 | BI ← AT | 0.345 | 0.051 | 6.753 | *** |

| H2 | AT ← PU | 0.703 | 0.095 | 7.444 | *** |

| H3 | AT ← PE | -0.033 | 0.092 | -0.356 | 0.722 |

| H4 | PU ← PE | 0.699 | 0.071 | 9.854 | *** |

| H5 | AT ← TR | 0.100 | 0.063 | 1.587 | 0.112 |

| H6 | PE ← TR | 0.313 | 0.065 | 4.807 | *** |

| H7 | TR ← LR | 0.324 | 0.072 | 4.497 | *** |

| H8 | TR ← EX | 0.443 | 0.080 | 5.548 | *** |
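The critical ratio column in Table 6 is the estimate divided by its standard error, and the p-value follows from that ratio. The sketch below shows the arithmetic under the assumption of a two-tailed test against a standard normal distribution; the function names are illustrative and this is not the AMOS implementation.

```python
import math


def critical_ratio(estimate, std_error):
    """Critical ratio: estimate divided by its standard error."""
    return estimate / std_error


def two_tailed_p(z):
    """Two-tailed p-value, assuming a standard normal reference distribution."""
    return math.erfc(abs(z) / math.sqrt(2))


# H5 (AT <- TR) and H3 (AT <- PE) as reported in Table 6
z_h5 = critical_ratio(0.100, 0.063)
z_h3 = critical_ratio(-0.033, 0.092)

print(round(z_h5, 3))  # 1.587
print(two_tailed_p(z_h5))  # close to the 0.112 reported for H5
print(two_tailed_p(z_h3))  # close to the 0.722 reported for H3
```

Small differences from the tabulated values are expected because the estimates and standard errors in Table 6 are rounded to three decimals.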

The results show that AT (β = 0.345, CR = 6.753, p < 0.001) has a positive and significant impact on BI, supporting Hypothesis 1. AT thus has a significant favorable impact on the BI of users who plan to utilize generative AI chatbot systems in Sri Lanka’s public sector.

Also, PU (β = 0.703, CR = 7.444, p < 0.001) has a positive and significant impact on AT, supporting Hypothesis 2. On the other hand, PE (β = −0.033, CR = −0.356, p = 0.722) has a negative and statistically non-significant impact on AT, so Hypothesis 3 is not supported. Three constructs, PU, PE, and TR, show mixed effects on user AT toward sustainable generative AI chatbot system acceptance in the public sector. These effects are related to the user’s trust and beliefs. User AT is very important in influencing BI and action towards acceptance of new technologies. The PU, PE, and TR constructs affect AT differently. According to the analysis results, higher TR increases PE, PE increases PU, and PU in turn improves user AT and motivates users to use generative AI chatbot systems in Sri Lanka’s public sector. Therefore, the TR construct shows a clear indirect impact on AT.

PE (β = 0.699, CR = 9.854, p < 0.001) has a positive and significant impact on PU, supporting Hypothesis 4 and clearly explaining the path between PE and PU. The relationship between these two constructs is very strong, and it is the core reason for the user’s AT toward generative AI chatbot system acceptance. PE positively influences the user’s PU; minimizing the technology’s complexity increases the belief that generative AI based applications are efficient to use and helpful for public services.

However, although TR (β = 0.100, CR = 1.587, p = 0.112) has a positive impact on AT, this result is not statistically significant, so Hypothesis 5 is not supported. The relationship between TR and PE is positive and significant (β = 0.313, CR = 4.807, p < 0.001), supporting Hypothesis 6. This explains the connection between PE and TR. According to this study, TR is an important notion that influences other concepts and consumer decisions. Risk and trust have an inverse relationship: as TR increases, the assessed risk decreases. Building TR is essential for increasing confidence in modern technology and its capacity to be used more efficiently and with less effort. PE is a key component of this model that defines the level of complexity of generative AI chatbot systems for usage in the public sector.

With respect to TR, LR (β = 0.324, CR = 4.497, p < 0.001) has a positive and significant impact on TR, supporting Hypothesis 7. This outlines the relationship between LR and TR. The results demonstrate that LR has a favorable impact on TR for generative AI chatbot system acceptance; a well-established legal/ regulatory system for generative AI chatbot systems enhances user TR, and this will ultimately lead to positive interaction with such technological tools.

Similarly, EX (β = 0.443, CR = 5.548, p < 0.001) has a positive and significant impact on TR, supporting Hypothesis 8. This result describes the path between EX and TR. The positive effect of EX on TR indicates that experience with modern technological applications and services is significantly related to trust in the public sector context. Prior experience in the use of technological applications and similar services has a significant impact on building TR and motivates the use of new technological applications in public sector organizations.

Direct effect, indirect effect, and total effect

The significance of the mediated effects was assessed by a bootstrapping method, which was employed to derive the direct, indirect, and total effects in this model. The results (Table 7) show that TR directly influences PE (β = 0.313, p < 0.01) and indirectly influences PU (β = 0.219, p < 0.01) and BI (β = 0.084, p < 0.01), confirming its mediating role in generative AI chatbot system acceptance. Additionally, EX has a strong effect on TR (β = 0.443, p < 0.01) and indirectly influences PE (β = 0.139, p < 0.01), PU (β = 0.097, p < 0.01), AT (β = 0.108, p < 0.01), and BI (β = 0.037, p < 0.01). Furthermore, LR directly influences TR (β = 0.324, p < 0.01) and indirectly affects PE (β = 0.101, p < 0.01) and PU (β = 0.071, p < 0.01), which indirectly contribute to AT formation and acceptance intention. These findings highlight that TR, LR, and EX significantly shape the acceptance of generative AI chatbot systems in public services in Sri Lanka.
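The indirect effects quoted above are consistent with summing the products of the standardized direct path coefficients along every route between two constructs. The following is a minimal sketch of that point-estimate calculation using the Table 6 coefficients; it does not reproduce the bootstrapping used to obtain the significance levels, and the function names are illustrative.

```python
# Standardized direct path coefficients from Table 6, keyed as (source, target)
paths = {
    ("AT", "BI"): 0.345,
    ("PU", "AT"): 0.703,
    ("PE", "AT"): -0.033,
    ("PE", "PU"): 0.699,
    ("TR", "AT"): 0.100,
    ("TR", "PE"): 0.313,
    ("LR", "TR"): 0.324,
    ("EX", "TR"): 0.443,
}


def total_effect(source, target):
    """Sum of coefficient products over every directed path (the model is acyclic)."""
    if source == target:
        return 1.0
    return sum(coef * total_effect(nxt, target)
               for (start, nxt), coef in paths.items() if start == source)


def indirect_effect(source, target):
    """Total effect minus the direct path coefficient, if a direct path exists."""
    return total_effect(source, target) - paths.get((source, target), 0.0)


print(round(indirect_effect("TR", "PU"), 3))  # 0.219
print(round(indirect_effect("TR", "BI"), 3))  # 0.084
print(round(indirect_effect("EX", "AT"), 3))  # 0.108
```

The three printed values match the corresponding indirect effects reported in the text.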

Table 7.

Results of total, direct, and indirect effect

Discussion

In recent years, the integration of AI, particularly generative AI chatbots, has surged within the public sector, offering innovative solutions for enhancing citizen engagement and streamlining services. However, the acceptance of these technologies has faced significant challenges, as many users exhibit resistance to interacting with generative AI chatbot systems. This paper aims to evaluate user trust in generative AI technology in depth, to identify the related factors influencing user acceptance in public administration service delivery in the Sri Lankan context, and to design and validate a new research model (augmented TAM) for successful generative AI technology acceptance that can be used for future initiatives in developing countries. Given the increasing prevalence of generative AI tools and the limited existing research to guide this inquiry, the new model seeks to provide fresh insights and support the broader acceptance of generative AI chatbot systems. A survey was conducted among a diverse group of public service users, and the collected data were analyzed using the CB-SEM approach.

Hypothesis H1 suggests that a positive attitude towards generative AI chatbot systems directly influences the user’s BI to adopt these systems within public services. In other words, if individuals, such as government employees or citizens interacting with public services, hold a favorable view of generative AI chatbot systems, they are more likely to support and utilize them. The stronger the positive attitude, the stronger the BI to use and accept generative AI chatbot systems in the public sector. This is because individuals are generally more inclined to embrace new technologies they perceive as beneficial and aligned with their needs and expectations. The results of this study also support the view that interaction and experience can shape attitudes and behaviors in significant ways [85].

Hypothesis H2 states that PU positively influences attitudes towards the acceptance of generative AI chatbot systems in public services. In simpler terms, if government employees and citizens find generative AI chatbot systems useful, they are more likely to have a positive attitude toward adopting them. The TAM [35] suggests that PU significantly influences AT towards technology, and the results of this study confirm the previous literature. When users perceive generative AI chatbot systems as useful for improving service quality and saving time, they are more likely to develop a positive attitude towards adopting the new technology.

Hypothesis H4 suggests that PE directly and positively influences the PU of generative AI chatbot systems in public services. PU refers to the extent to which individuals believe that using generative AI chatbot systems will enhance their job performance or quality of service delivery. When generative AI chatbot systems are perceived as easy to use, individuals are more likely to recognize their potential benefits and value, and to see them as useful tools that can improve efficiency and overall effectiveness within public services. This aligns with the results and suggestions of TAM [35]. Therefore, the results of the study confirm PE as an important factor in technology acceptance.

The survey results revealed that several new factors significantly influence users’ BI towards generative AI chatbot system acceptance in public service delivery. According to prior research, the TR factor can motivate individuals to adopt technology [65]. An empirical evaluation of the technology acceptance model for AI in e-commerce confirmed the positive impact of TR on PE [86]. Similarly, the findings of this study highlight that TR has a strong direct impact on PE, confirming hypothesis H6, while PE directly impacts PU, which positively impacts user AT and decision making and ultimately leads users’ BI toward acceptance of generative AI chatbot systems in public service delivery. Initiatives like the personal data protection act and the digital government 2030 in Sri Lanka are designed to increase confidence in digital services, which may indirectly improve usability perceptions. Furthermore, as citizens expect accountability and fairness in the provision of public services, trust in generative AI systems is very important. Citizens are more likely to view generative AI chatbot systems as fluid and intuitive if they think the systems operate consistently and impartially. This implies increasing PE and, eventually, speeding up the acceptance of generative AI chatbot systems in Sri Lanka’s generative AI transformations for smart cities and smart government plans.

Also, the results of this study indicate that the new external construct, LR, has a strong impact on TR and plays a crucial role in fostering trust, confirming hypothesis H7. Respondents largely expressed confidence in their safety and in the trustworthiness of these generative AI-driven systems in the public sector in Sri Lanka, provided that strong legal and regulatory frameworks are available. The lack of formal generative AI laws and regulations in Sri Lanka breeds ambiguity and may erode public confidence in generative AI chatbot systems. To increase confidence and encourage responsible generative AI chatbot system acceptance in Sri Lanka, it is imperative that a complete framework of laws and regulations for generative AI initiatives be passed, transparency be encouraged, ethical principles be established, public awareness be raised, and stakeholder engagement be encouraged. Moreover, the study results confirm hypothesis H8, showing the positive influence of EX on TR in the acceptance of generative AI chatbot systems in public sector organizations. This confirms the importance of user experience in building trust in such technologies to enhance acceptance in Sri Lankan society.

However, hypothesis H3 is not supported by the results of this study. Hypothesis H3 concerns the relationship between PE and AT. The TAM [35] suggests that PE significantly influences AT towards technology acceptance. We therefore hypothesized that if a technology is perceived as easy to use, users would develop a more favorable AT toward adopting it. The failure to confirm this relationship may reflect the nature of generative AI transformations for smart cities and smart government plans in Sri Lanka, where citizens might be less concerned with PE because of a higher degree of trust in generative AI chatbot systems. Additionally, many government employees and citizens may already have experience with emerging technology initiatives and e-services from the private sector. As a result, PE might not be as influential on their attitude as originally expected in the Sri Lankan public services context.

Likewise, hypothesis H5, which concerns the relationship between TR and AT, is not supported. Previous studies have shown that trust is crucial for technology acceptance, especially where data security and privacy are concerned, and have found it to be a key factor in determining how users feel about embracing new technology. However, this study's findings indicate no substantial relationship between TR and AT, which may be due to the unique conditions of Sri Lanka's generative AI transformation environment. In a nation where e-government services are still being integrated, citizens may have adopted pragmatic attitudes towards new technology, emphasizing usability and accessibility over trust concerns. The role that trust plays in shaping individuals' attitudes towards the acceptance of digital government solutions may also be dampened by the standing and service delivery track record of public agencies.

Conclusions

Theoretical implications

This study makes a meaningful contribution by demonstrating that the new research model, which extends TAM [18, 35] with external factors such as trust, legal/regulatory support, and user experience, is strong enough to explain the acceptance level of new technology in different environments. The model was built around eight hypotheses and focused on analyzing the relationships between these external factors and the existing TAM core factors. The results supported most of the proposed hypotheses (all except H3 and H5), evidencing the positive indirect influence of TR, LR, and EX, through several constructs, on BI towards acceptance of generative AI chatbot systems in the Sri Lankan public sector.

Practical implications

First, the survey results revealed several new factors, especially user TR, that significantly influence users' BI towards generative AI chatbot systems in public service delivery. The findings highlight user trust as a key external factor that acts on the existing TAM [18, 35] to shape user BI and decision making, ultimately leading to greater acceptance of generative AI chatbot systems in the Sri Lankan public service. At the same time, LR and EX have a positive impact on user TR.

Second, the LR construct in the new research model demonstrates how a well-structured regulatory framework boosts user confidence and trust by assuring transparency, accountability, and security. When explicit legal rules govern data protection, privacy, and the ethical use of generative AI, public sector staff and citizens are more likely to rely on generative AI chatbot systems for government tasks and services. Furthermore, transparency in law might mitigate concerns about privacy violations, algorithmic bias, and the transparency of decision-making. Since generative AI transformations for smart cities and smart government plans in Sri Lanka have only recently begun, the availability of strong cybersecurity laws, generative AI ethics and integrity regulations, and compliance standards would help ensure that generative AI-driven systems serve Sri Lankan citizens by delivering public services through modern technologies. Legal support systems, such as grievance handling procedures and regulatory supervision institutions, can also help to build user trust by ensuring accountability when AI failures or misconduct occur. In addition, the user trust strengthened by legal/regulatory support can increase user engagement, decrease resistance to accepting generative AI systems, and improve the effectiveness of public services. This highlights the importance of lawmakers and policymakers continually updating and refining legal frameworks in response to technological change. Safeguarding ethical generative AI governance would enhance citizen trust while also contributing to the long-term viability of generative AI initiatives and generative AI transformation in Sri Lanka.

Finally, this study indicates that user experience has a meaningful relationship with user trust and, through it, with users' behavioral intention to use generative AI chatbot systems in Sri Lankan public services. This shows that how people interact with and perceive generative AI chatbot systems is critical for fostering trust and acceptance. A positive user experience, defined by understandability, efficiency, accuracy, responsiveness, and natural design, enhances confidence in using a generative AI chatbot system and makes users more likely to trust it for public service interactions. In Sri Lanka's public sector, where the level of digital literacy varies, a generative AI chatbot system must provide a smooth and user-friendly interface and experience to accommodate various user groups. Users will trust generative AI chatbot systems if they provide clear communication, tailored responses, and error-free service delivery. Furthermore, reducing technical difficulties, supporting multiple languages (Sinhala, Tamil, and English), and maintaining 24-hour availability can all improve the user experience, increasing users' confidence and trust. In addition, positive previous experiences with AI chatbot systems, especially with similar technologies in public services, might increase familiarity and reduce uncertainty. When people repeatedly experience accuracy, security, and transparency, they develop trust in AI chatbot systems within public services. Thus, investing in user-centric design, continuous system improvement, and feedback-driven development is essential for growing AI chatbot system acceptance in Sri Lanka's public service delivery and in future generative AI transformations for smart cities and smart government plans.

Accordingly, the government and policymakers of Sri Lanka, as a developing country, should consider the main findings of this study to secure the long-term feasibility of generative AI transformation projects in the public sector. The deployment of current technological advances, such as AI chatbot applications, must take consumer trust especially into account, backed by government laws and regulations and by a positive, motivating user experience. The Sri Lankan government should also encourage and regulate the use of generative AI-based applications and implement appropriate laws and regulations that set limits on their usage, which would ultimately contribute to building user trust in Sri Lanka's generative AI transformations for the smart cities and smart government concept.

Limitations and future research

The proposed approach and research framework have a limitation: the model was designed to measure only users' intention, not the actual usage rate of this advanced technology. Future studies could therefore strengthen our findings with real usage data. It is also important to note that expanding the sample to include a considerably larger number of Sri Lankan public service users would further strengthen the study's findings.